Enhancing Audio Clarity: Beamforming with the Viibe SonAQ1's 4 MEMS Microphones

Continuing our series on the cutting-edge features of the Viibe SonAQ1 Hub, today we're spotlighting beamforming—a powerful technique that leverages the device's four highly sensitive MEMS microphones to zero in on sounds from specific directions while minimizing unwanted noise from elsewhere. This capability not only enhances real-time environmental monitoring but also allows for retrospective analysis on historical data. Ideal for applications like urban noise control, wildlife tracking, or industrial diagnostics, beamforming turns the SonAQ1 into a directional "ear" for the world.

In this post, we'll explain what beamforming is, how it works with the SonAQ1's microphone array, its noise-reduction benefits, and how it can be applied to previously recorded audio.

Understanding Beamforming: The Basics

Beamforming is a signal processing method used in microphone arrays to create a virtual "beam" that focuses audio capture on a desired direction. Think of it like a spotlight in a dark room: it amplifies signals from the target area while dimming everything else. This is achieved by exploiting the spatial arrangement of multiple microphones and the time differences in how sound waves arrive at each one.

In the Viibe SonAQ1, the four MEMS (Micro-Electro-Mechanical Systems) microphones are strategically positioned in a compact array, typically in a square or tetrahedral configuration. This setup allows for both 2D (azimuth) and limited 3D (elevation) directionality, making it versatile for various environments. The onboard DSP (Digital Signal Processor) handles initial processing, while the heavier beamforming work runs in the cloud or during post-analysis.

How Beamforming Works on the Viibe SonAQ1

The process starts with the raw audio signals from each of the four MEMS microphones. Sound waves from a specific direction will reach the microphones at slightly different times, depending on the array's geometry and the speed of sound (about 343 m/s).
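To get a feel for how small these time differences are, here is a minimal sketch that computes them for a distant source. The 4 cm square layout and the `arrival_delays` helper are assumptions made for illustration; they are not published SonAQ1 specifications.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, the figure quoted above

# Hypothetical geometry: four microphones on the corners of a 4 cm square,
# coordinates in metres relative to the array centre.
MIC_POSITIONS = [(0.02, 0.02), (-0.02, 0.02), (-0.02, -0.02), (0.02, -0.02)]


def arrival_delays(azimuth_deg, positions=MIC_POSITIONS, c=SPEED_OF_SOUND):
    """Return each microphone's arrival time (seconds) relative to the array centre.

    Assumes a far-field source, so the wavefront is treated as a plane.
    Negative values mean the wavefront reaches that microphone before the centre.
    """
    theta = math.radians(azimuth_deg)
    ux, uy = math.cos(theta), math.sin(theta)  # unit vector pointing at the source
    return [-(x * ux + y * uy) / c for x, y in positions]


delays = arrival_delays(45.0)
for i, d in enumerate(delays):
    print(f"mic {i}: {d * 1e6:+.1f} microseconds")
```

With this assumed layout, the largest spread between two microphones is about 165 microseconds (the 5.7 cm diagonal divided by 343 m/s). At an assumed 48 kHz sample rate that is roughly eight samples, which is why the delays need to be applied with sub-sample precision.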

To focus on a particular direction:

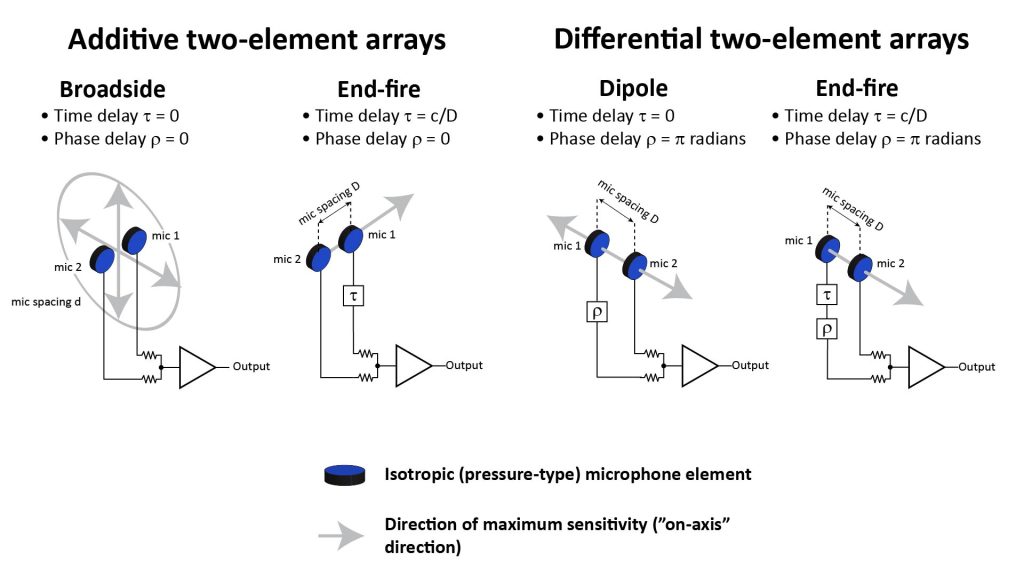

- Delay and Sum: The system applies precise time delays (or, in the frequency domain, phase shifts) to the signal from each microphone, calculated from the desired steering angle. When the delayed signals are summed, sound from the target direction adds constructively into a stronger signal, while sound from other directions adds out of phase and partially cancels.

- Adaptive Algorithms: Advanced techniques like Minimum Variance Distortionless Response (MVDR) or the Generalized Sidelobe Canceller (GSC) adjust the beam weights from the incoming data to suppress dominant noise sources. The SonAQ1's nanosecond-accurate GPS timestamping keeps recordings synchronized, so delay calculations stay accurate even in multi-hub setups.

- Direction Steering: Users can "steer" the beam digitally—via software in the Viibe app or cloud dashboard—to focus on angles like 0° (straight ahead) or 45° off-axis, without physically moving the hub.
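The delay-and-sum step can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not Viibe's implementation: the 4 cm square layout, the 48 kHz sample rate, the simulated 3 kHz tone, and every function name here are hypothetical.

```python
import math

C = 343.0      # speed of sound, m/s
FS = 48_000    # sample rate in Hz (assumed)
# Assumed layout: four microphones on the corners of a 4 cm square (metres).
MICS = [(0.02, 0.02), (-0.02, 0.02), (-0.02, -0.02), (0.02, -0.02)]


def plane_wave_delays(azimuth_deg):
    """Arrival time at each mic relative to the array centre, far-field source."""
    th = math.radians(azimuth_deg)
    return [-(x * math.cos(th) + y * math.sin(th)) / C for x, y in MICS]


def simulate(azimuth_deg, freq_hz, n_samples):
    """Synthesise what each mic would record for a pure tone from one direction."""
    return [
        [math.sin(2 * math.pi * freq_hz * (n / FS - tau)) for n in range(n_samples)]
        for tau in plane_wave_delays(azimuth_deg)
    ]


def sample_at(channel, position):
    """Linearly interpolate a channel at a fractional sample position."""
    i = math.floor(position)
    if i < 0 or i + 1 >= len(channel):
        return 0.0
    frac = position - i
    return (1 - frac) * channel[i] + frac * channel[i + 1]


def delay_and_sum(channels, steer_deg):
    """Time-align the channels for the steering angle, then average them."""
    shifts = [tau * FS for tau in plane_wave_delays(steer_deg)]
    return [
        sum(sample_at(ch, k + s) for ch, s in zip(channels, shifts)) / len(channels)
        for k in range(len(channels[0]))
    ]


def rms(signal, margin=16):
    """RMS level, skipping the edges where interpolation ran out of samples."""
    core = signal[margin:-margin]
    return math.sqrt(sum(v * v for v in core) / len(core))


recording = simulate(azimuth_deg=45.0, freq_hz=3000.0, n_samples=2048)
on_target = rms(delay_and_sum(recording, steer_deg=45.0))
off_target = rms(delay_and_sum(recording, steer_deg=135.0))
print(f"steered at source: {on_target:.3f}   steered 90 degrees away: {off_target:.3f}")
```

The 3 kHz tone was picked because, with this assumed spacing, a beam steered 90° away lands close to a null, so the contrast is easy to see. At other frequencies the off-target attenuation is smaller, which is one reason adaptive methods are attractive.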

In a network with hubs spaced 100 m apart, per-hub beamforming combines with TDOA (Time Difference of Arrival) for even finer localization, as discussed in our earlier posts.

This directional focus is especially useful in noisy environments, such as cities where traffic drowns out subtle sounds like bird calls, or factories where machinery noise masks equipment faults.

Reducing Unwanted Noise from Other Directions

The true power of beamforming lies in its noise suppression. By placing nulls (directions of minimal sensitivity) toward interfering sources, the SonAQ1 can reduce interference by 10-20 dB or more, depending on the array geometry, the frequency of the sound, and the algorithm used. For example:

- In a park, steer the beam toward a bird nesting area to isolate chirps while nulling out distant road noise.

- In an industrial site, focus on a specific machine to detect anomalies, ignoring echoes from walls or other equipment.
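How much suppression a given array offers in each direction can be estimated from its beam pattern. The sketch below does this for a plain delay-and-sum beam; the 4 cm square layout, the 3 kHz test frequency, and the `response_db` helper are assumptions for illustration, not measured SonAQ1 figures.

```python
import cmath
import math

C = 343.0  # speed of sound, m/s
# Assumed layout: four microphones on the corners of a 4 cm square (metres).
MICS = [(0.02, 0.02), (-0.02, 0.02), (-0.02, -0.02), (0.02, -0.02)]


def delays(azimuth_deg):
    """Far-field arrival time at each microphone relative to the array centre."""
    th = math.radians(azimuth_deg)
    return [-(x * math.cos(th) + y * math.sin(th)) / C for x, y in MICS]


def response_db(look_deg, arrival_deg, freq_hz):
    """Delay-and-sum gain in dB for a tone from arrival_deg when steered to look_deg."""
    total = sum(
        cmath.exp(-2j * math.pi * freq_hz * (t_arr - t_look))
        for t_arr, t_look in zip(delays(arrival_deg), delays(look_deg))
    )
    gain = abs(total) / len(MICS)
    return 20 * math.log10(max(gain, 1e-6))  # floor avoids log10(0) at an exact null


# Beam steered to 0 degrees; sweep the direction the sound actually comes from.
for angle in range(0, 181, 30):
    print(f"{angle:3d} deg: {response_db(0.0, angle, 3000.0):6.1f} dB")

# Gain against noise that is uncorrelated between microphones: 10*log10(N).
print(f"uncorrelated-noise gain: {10 * math.log10(len(MICS)):.1f} dB")
```

Under these assumptions, a 3 kHz tone arriving from the side is attenuated by roughly 14 dB, while one arriving from directly behind loses only about 5 dB. A fixed beam has deep and shallow directions that shift with frequency, so the larger reductions quoted above generally rely on adaptive weighting or a favorable geometry.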

This spatial filtering complements traditional single-channel noise reduction, because it separates sounds by direction rather than by frequency content alone. Combined with AI spectrogram analysis (from our previous blog), it enhances sound identification by providing cleaner inputs, leading to more accurate categorizations and heat maps of pleasant vs. offensive soundscapes.

Applying Beamforming Historically on Recorded Data

One of the SonAQ1's standout features is its ability to apply beamforming retrospectively on stored audio. Since the hub records raw, multi-channel audio (from all four microphones) with precise timestamps and uploads it to the cloud (via AWS S3), you don't need to decide the beam direction in real time.

How it works historically:

- Data Retrieval: Pull archived audio from the cloud storage.

- Post-Processing: Use software tools or Viibe's cloud-based algorithms (powered by AWS Lambda and SageMaker) to apply beamforming filters. Specify new steering angles or adaptive modes to re-analyze the data.

- Re-Analysis: Generate updated spectrograms, identifications, or localizations. For instance, if an unusual noise was recorded last week, steer beams in different directions to isolate its source after the fact.

- Benefits: This flexibility is invaluable for investigations, like reviewing environmental incidents or refining AI models with cleaner historical datasets.

By processing offline, you can experiment with various parameters without losing original data, making the SonAQ1 a tool for both live monitoring and forensic audio analysis.
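As a rough sketch of what "steering after the fact" can look like, the snippet below sweeps a delay-and-sum beam around a stored four-channel recording and reports the direction with the strongest response. Everything here is an assumption for illustration: the recording is a synthetic 3 kHz tone standing in for audio pulled from the archive, and the array layout, sample rate, and function names are hypothetical rather than part of any Viibe API.

```python
import math

C = 343.0      # speed of sound, m/s
FS = 48_000    # sample rate in Hz (assumed)
# Assumed layout: four microphones on the corners of a 4 cm square (metres).
MICS = [(0.02, 0.02), (-0.02, 0.02), (-0.02, -0.02), (0.02, -0.02)]


def delays(azimuth_deg):
    """Far-field arrival time at each microphone relative to the array centre."""
    th = math.radians(azimuth_deg)
    return [-(x * math.cos(th) + y * math.sin(th)) / C for x, y in MICS]


def interp(channel, pos):
    """Linearly interpolate a channel at a fractional sample position."""
    i = math.floor(pos)
    if i < 0 or i + 1 >= len(channel):
        return 0.0
    frac = pos - i
    return (1 - frac) * channel[i] + frac * channel[i + 1]


def steered_power(channels, steer_deg, margin=16):
    """Mean power of the delay-and-sum output for one steering angle."""
    shifts = [tau * FS for tau in delays(steer_deg)]
    total = 0.0
    count = 0
    for k in range(margin, len(channels[0]) - margin):
        y = sum(interp(ch, k + s) for ch, s in zip(channels, shifts)) / len(channels)
        total += y * y
        count += 1
    return total / count


def scan(channels, step_deg=15):
    """Try every steering angle on a grid and return the strongest one."""
    powers = {a: steered_power(channels, a) for a in range(0, 360, step_deg)}
    return max(powers, key=powers.get), powers


# Stand-in for an archived recording: a 3 kHz tone arriving from 120 degrees.
archived = [
    [math.sin(2 * math.pi * 3000.0 * (n / FS - tau)) for n in range(1024)]
    for tau in delays(120.0)
]
best_angle, powers = scan(archived)
print(f"strongest response at {best_angle} degrees")
```

Because the original channels are never modified, the same recording can be rescanned with a finer angle grid, a different frequency band, or an adaptive method whenever a new question comes up.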

The Viibe Advantage: Clearer Insights for a Better World

Beamforming with the Viibe SonAQ1's four MEMS microphones elevates environmental monitoring from passive listening to active, directional intelligence. It reduces noise clutter, sharpens focus, and unlocks historical insights—all in a compact, easy-to-deploy hub.

How could you use this in your projects? Let us know in the comments! For more details on the SonAQ1, head to our product page. Next up: Integrating air quality data with sound analysis. Stay tuned and keep vibing!